VR-Vantage Capabilities

VR-Vantage is the visual component of the MAK ONE architecture. Its rendering engine generates real-time, full-motion video of the synthetic environment, including the terrain, dynamic oceans, weather, and atmosphere, along with all the entities being simulated by any simulator on the distributed simulation network.

Visualize from Every Vantage Point

VR-Vantage can be used in several contexts within a system architecture:

- VR-Vantage can be used as a 3D battlefield information station where you can view the virtual world in 2D and 3D. Whether you need it for situational awareness, simulation debugging, or after action review, VR-Vantage provides the most data about your networked virtual world and presents it in a clear and accessible way. With VR-Vantage, you can quickly achieve a “big picture” understanding of a battlefield situation while retaining an immersive sense of perspective.

- VR-Vantage is a software Image Generator (IG) for out-the-window (OTW) scenes, camera views, and sensor channels. Its built-in distributed rendering architecture supports many different display configurations — from simple desktop deployments to multichannel displays for virtual cockpits and training systems.

- The VR-Vantage Toolkit is an API that comes with all the software you need to completely rebuild visual applications like VR-Vantage and also allows you to customize and extend these applications to fit your unique requirements. The toolkit also gives you the power to embed any of VR-Vantage’s capabilities directly into your simulation applications. The VR-Vantage Toolkit is based on the MAK ONE modular architecture, so you can leverage value-added plug-ins built by the OSG community and MAK partners.

Key Features

Connectivity

- CIGI

- DIS

- HLA

Renderer

- Multi-channel

- Distortion correction

Simulated Environmental Effects

- Dynamic weather: thunderstorms, rain, snow, clouds

- Dynamic ocean: waves, surf, wakes, shallow water, and buoyancy (responds to dynamic weather)

- Particle-based recirculation and downwash effects

- Rotor wash

- Particle-based weather systems

- Procedural ground textures

- Realistic and dense vegetation

Terrain

- VR-World Whole-Earth

- CDB, OpenFlight, and many other formats

- Dynamic terrain: damage on buildings and bridges

- Terrain blending options

Simulated Models

- Realistic lifeforms: animated 3D human characters and animals with cultural diversity

- Extensive library of high-quality air, land, and maritime moving entity models

- Dynamic structures: damage to buildings and bridges

Sensors

- Optical Sensor Models (EO/IR/NVG) included

- Optical Sensor Physics (EO/IR/NVG) with SensorFX Plug-in

2D Overlays

- Heads-up display (HUD) support

System / Performance

- Open Architecture

- Commercial-off-the-shelf (COTS) Software

- Scalable/manageable performance

- Supports AR / VR / MR

Visualization Features

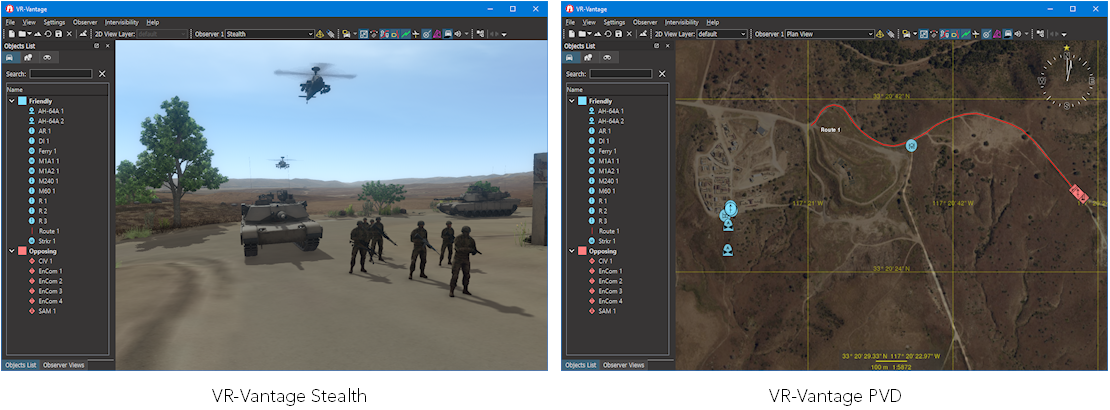

VR-Vantage delivers a realistic, three-dimensional view of terrain, vehicles, and other objects. VR-Vantage’s navigation controls give you complete freedom to travel anywhere as an unobtrusive observer. You can place the observer at a specific location, or link it to a vehicle for automatic tracking of battlefield activities. While an exercise is underway, you can quickly switch attach modes to see the scene from any perspective. VR-Vantage can be visible to other applications or invisible, at your discretion.

VR-Vantage also provides a two-dimensional plan view of the terrain to give you the big picture of a simulation. Terrains can include multiple layers for display in the 2D plan view, which you can select using the 2D View Layers. This enables you to define different maps while still seeing a realistic terrain in the stealth view.

Visualizing Terrain

Simulations must exist in the context of a simulated world. VR-Vantage applications support multiple terrain formats and allow you to combine elevation data, imagery, and feature data to support your simulated environment.

Terrain Agility and Composability

VR-Vantage allows you to build your terrain at runtime using a variety of database and vector formats. VR-Vantage supports the following broad types of terrain:

- Terrain models. Static terrains, such as OpenFlight, built using terrain construction applications.

- Paging terrain. Large area terrains that page in multiple terrain pages, such as MetaFlight.

- Direct from source. Terrains composed by combining various types of terrain elevation, imagery, and features data.

VR-Vantage can load OpenFlight, CDB, CTDB, FBX, and MetaFlight databases. It can load feature data from shapefiles. It can stream 3D tiles. You can also superimpose geospatial images such as GeoTiffs or other raster image files on the terrain. You can save these composed terrains in the VR-Vantage MTF file format.

- Open streaming terrain. Terrains that stream data from various public and private data sources, such as VR-TheWorld.

- Procedural terrain. Terrain created using geo-typical imagery based on soil type data.

- Dynamic terrain. VR-Vantage allows changes to OpenFlight models through HLA, DIS, and API calls to support a correlated and robust dynamic terrain environment.

Streaming and Paging Terrain

For simulation on large terrains, VR-Vantage can stream data from external servers or from local directories. VR-Vantage applications can stream elevation and imagery from terrain servers such as VR-TheWorld or other WMS-C (Web Mapping Service-Cached, from Open Geospatial Consortium) and TMS (OSGeo’s Tile Map Service) servers.

VR-Vantage uses osgEarth to import streaming terrain elevation and imagery data. (osgEarth is an open source plug-in to OpenSceneGraph, maintained by Pelican Mapping at http://osgEarth.org.) For MetaFlight, VR-Vantage has its own pager.
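To make the tiled-streaming idea concrete, here is a minimal Python sketch that computes which tile of a TMS-style pyramid contains a given longitude and latitude. It assumes the common global-geodetic layout (two 180-degree tiles at zoom level 0, origin at the bottom-left corner). The function name `geodetic_tile` is hypothetical; this is not VR-Vantage or osgEarth code.

```python
import math

def geodetic_tile(lon_deg, lat_deg, zoom):
    """Return (x, y) of the tile containing a point.

    Assumed layout: 2 x 1 tiles at zoom 0, each level halves the tile
    edge, and tile (0, 0) sits at the bottom-left (-180, -90) corner.
    """
    tile_deg = 180.0 / (2 ** zoom)   # tile edge length in degrees
    cols = 2 ** (zoom + 1)           # tiles spanning 360 degrees of longitude
    rows = 2 ** zoom                 # tiles spanning 180 degrees of latitude
    # Clamp so that lon = 180 or lat = 90 falls in the last tile.
    x = min(int(math.floor((lon_deg + 180.0) / tile_deg)), cols - 1)
    y = min(int(math.floor((lat_deg + 90.0) / tile_deg)), rows - 1)
    return x, y
```

A client would typically request only the tiles that intersect the current view, at a zoom level chosen from the observer's distance to the terrain.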

Procedural Terrain

A procedural terrain applies high-resolution geo-typical textures to the terrain based on soil-type information rather than using satellite imagery. This approach provides high-quality visuals over large areas without the performance cost of high-resolution imagery. Customers who use procedural terrain will usually insert locally accurate imagery and features for the areas that they are particularly interested in. You can procedurally add rooftop clutter, such as AC units, hatches, and other mechanical items, to extruded buildings.

Visualization of Feature Data

VR-Vantage Plan View can display the raw feature data of shapefiles (or VMAP) based on color-coded mappings. VR-Vantage can import S-57 data and display buoys and beacons. You can extrude buildings based on point feature data. Extruded buildings support multi-story texture diversity and complex rooftops. You can replace extruded buildings with specific OpenFlight models to produce more realistic scenes.

Vegetation

VR-Vantage uses SpeedTree software and content for animated real-time 3D foliage and vegetation. SpeedTree vegetation can move with the wind. SpeedTree is developed by Interactive Data Visualization (IDV) (https://www.speedtree.com).

Props

Props are terrain elements, such as buildings, light poles, and vegetation, that you can manipulate through the VR-Vantage GUI. VR-Vantage can import shapefiles (or other feature data file formats) and create geometry (props) for point features in the source data.

Visualizing Entities

VR-Vantage includes many 3D vehicle models, some of which display movement of articulated parts, such as turrets and landing gear. These models can change to show a damaged or destroyed state. You can provide your own models and map them to entity types. (For more information, please see “3D Models, Terrain, and Graphical Content”.)

VR-Vantage can render real-time shadows for entities and lifeforms based on the position of the sun or moon.

Realistic 3D Human Characters

VR-Vantage uses DI-Guy software and content for human character animation. VR-Vantage comes with DI-Guy functionality built in, and with a large set of DI-Guy characters, appearances, and animations. If a vehicle has interior geometry, VR-Vantage can automatically put a human character in the driver’s seat.

2D Icons

In Plan View mode, VR-Vantage displays entities using MIL-STD 2525B icons. By default, 2D icons scale based on the observer's altitude, appearing smaller from greater heights. You can also adjust the size of icons to declutter the display.

Cockpits and Instrument Panels

VR-Vantage uses GL Studio software and content to render interactive cockpit instrumentation displays. VR-Vantage is delivered with several generic cockpit displays. It also includes individual instrument displays that you can move around the window and arrange as you desire. GL Studio is developed by DiSTI (https://www.disti.com).

Trajectory Smoothing

VR-Vantage can smooth the trajectories of moving vehicles to compensate for discontinuous positional data.
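One common way to hide jumps in network position updates is to move the displayed position only part of the way toward each newly reported position. The Python sketch below illustrates that idea with simple exponential smoothing. It is an assumption for illustration only; the source does not say which smoothing method VR-Vantage uses, and `smooth_position` and `alpha` are hypothetical names.

```python
def smooth_position(displayed, reported, alpha=0.2):
    """Move the displayed position a fraction `alpha` toward the reported one.

    Calling this once per frame turns a sudden jump in the reported
    position into a short, continuous glide.
    """
    return tuple(d + alpha * (r - d) for d, r in zip(displayed, reported))


# The reported position jumps 10 m along x; the displayed position glides.
position = (0.0, 0.0, 0.0)
for _ in range(3):
    position = smooth_position(position, (10.0, 0.0, 0.0))
```

A larger `alpha` converges faster but hides less of the discontinuity.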

Surface Entity Movement

When dynamic ocean is enabled, surface entities bob up and down with ocean swells. Destroyed entities sink beneath the waves.

Ground Clamping

The ground clamping feature can ensure that all ground entities are placed correctly on the terrain surface.
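Conceptually, ground clamping replaces an entity's reported altitude with the terrain elevation under it. The following Python sketch shows the idea on a regular elevation grid with bilinear interpolation. The grid layout and the function names are assumptions for illustration, not the VR-Vantage implementation.

```python
def terrain_height(grid, spacing, x, y):
    """Bilinearly interpolate elevation from grid[row][col] with posts
    `spacing` meters apart (rows run along y, columns along x)."""
    col, row = x / spacing, y / spacing
    # Pick the lower-left post of the cell, clamped to the grid.
    c0 = max(0, min(int(col), len(grid[0]) - 2))
    r0 = max(0, min(int(row), len(grid) - 2))
    fx, fy = col - c0, row - r0
    bottom = grid[r0][c0] + fx * (grid[r0][c0 + 1] - grid[r0][c0])
    top = grid[r0 + 1][c0] + fx * (grid[r0 + 1][c0 + 1] - grid[r0 + 1][c0])
    return bottom + fy * (top - bottom)


def clamp_to_ground(position, grid, spacing, offset=0.0):
    """Return the position with z replaced by terrain elevation plus offset."""
    x, y, _ = position
    return (x, y, terrain_height(grid, spacing, x, y) + offset)
```

For example, with a 2 x 2 grid `[[0, 10], [20, 30]]` and 100 m spacing, an entity reported at `(50, 50, 999)` is clamped to an elevation of 15 m.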

Inset Views

You can display inset views of individual entities. The illustration shows a helicopter hovering over one area of the terrain with an inset view of a vehicle driving through the town.

You can change the observer position and orientation in an inset view using keyboard controls. You can also edit window and channel properties for the window in the Display Engine Configuration Editor.

Sensor Views

VR-Vantage can display the view from gimbaled visual sensors simulated by VR-Forces, such as a camera on a UAV. The view is displayed in an inset window that has information about the observer mode and area being viewed. The window has its own observer and you can change the observer mode in the view.

Sound Effects

Sound effects increase the immersive effect of the visual environment. VR-Vantage can associate sound effects with entities, fire events, and detonation events. You can enable and disable the use of sound effects and set a variety of parameters such as the number of voices, speed of sound, and low-pass filtering.

Visualizing the Environment

VR-Vantage creates a realistic atmospheric environment with sun, moon, clouds, lighting, and precipitation. For the marine environment, VR-Vantage renders realistic ocean effects, including waves, swells, wakes, and spray effects. Users can choose among several pre-configured environment conditions or adjust any of the weather and marine features to create custom weather conditions. VR-Vantage can display weather conditions sent from VR-Forces.

VR-Vantage uses SilverLining software and content to compute lighting and to render the atmosphere. SilverLining is developed by Sundog Software (https://www.sundog-soft.com). VR-Vantage uses the Triton SDK, also from Sundog Software, to create the marine environment.

Configurable Weather

VR-Vantage lets you set the wind direction and speed, precipitation type and intensity, visibility, and cloud cover. Wind direction and speed affect dynamic ocean. Together with the lighting effects, varying cloud cover creates dramatic environment visualization.

VR-Vantage supports a wide variety of environment conditions including fog, dust and sand storms. For precipitation, it can visualize rain, snow, sleet, and hail. Rain can accumulate in puddles and snow can accumulate and blow in the wind.

You can render the effect of rain splashing on the observer’s camera. When dynamic ocean is enabled, waves can splash onto the observer view.

Animated Flags and Windsocks

Flags on terrain and ships, and also windsocks, respond to wind speed and direction.

Dynamic Ocean

VR-Vantage supports a variety of dynamic ocean effects, including:

- Douglas Sea State. This includes wind waves (wind sea), swell character, and the directions of each. VR-Vantage allows each to be separately configured.

- Wave chop.

- Surface transparency. The ability to see through the water from above sea level.

- Underwater visibility. The ability to see underwater.

- Swell.

- Surge depth. Lets you calm shallow water to visualize offshore wind and calm harbors.

Lighting

Date and Time

VR-Vantage uses a full-year ephemeris model that changes the position of the sun and moon as a function of date and time of day. You can set the date and advance time in real time or at a faster or slower rate. VR-Vantage can also advance time based on messages from VR-Forces.
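Advancing time "at a faster or slower rate" amounts to scaling elapsed wall-clock time before adding it to the simulated date and time. A minimal Python sketch of that arithmetic follows. The function name and the `rate` parameter are hypothetical and do not correspond to VR-Vantage settings.

```python
from datetime import datetime, timedelta

def advance_sim_time(sim_time, wall_elapsed_s, rate=1.0):
    """Advance simulated time by wall-clock seconds scaled by `rate`.

    rate = 1.0 is real time, rate > 1.0 is faster, 0 < rate < 1.0 is slower.
    """
    return sim_time + timedelta(seconds=wall_elapsed_s * rate)


# One wall-clock minute at 10x moves simulated time forward ten minutes.
noon = datetime(2024, 6, 21, 12, 0, 0)
later = advance_sim_time(noon, 60.0, rate=10.0)
```

An ephemeris model would then use the resulting date and time to position the sun and moon.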

Lighting Effects

VR-Vantage supports the following lighting effects:

- Dynamic lighting (illustrated).

- High dynamic range lighting.

- Forward+ lighting.

- Ocean planar reflection.

- Lens flare.

- Crepuscular (sun) rays.

- Atmospheric scattering.

- Shadows.

- Fresnel lighting for scene reflections.

- HBAO+ (Horizon-Based Ambient Occlusion) from NVIDIA.

VR-Vantage supports light lobes as defined in an OpenFlight model. The light can be cast onto the terrain, and the lights can rotate or move like a searchlight or a lighthouse. Because cast lights are computationally expensive, VR-Vantage only displays the N closest lights to the observer, where N can be configured.
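Selecting the N closest lights is a straightforward nearest-neighbor selection. The Python sketch below shows one way to do it. The data layout (name and position tuples) and the function name are assumptions for illustration.

```python
import heapq
import math

def closest_lights(observer, lights, n):
    """Return the n lights nearest to the observer, nearest first.

    `lights` is a list of (name, (x, y, z)) tuples.
    """
    return heapq.nsmallest(
        n, lights, key=lambda light: math.dist(observer, light[1])
    )


lights = [
    ("tower", (100.0, 0.0, 0.0)),
    ("jeep", (10.0, 0.0, 0.0)),
    ("pier", (50.0, 0.0, 0.0)),
]
active = closest_lights((0.0, 0.0, 0.0), lights, 2)
```

Here `active` holds the "jeep" and "pier" lights; the more distant "tower" light is skipped until the observer moves closer.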

VR-Vantage also supports light points. These are single points that light up in the dark, but do not cast light on objects near them. For example, light points could be used for airport or sea channel navigation. Lights can be colored, can blink, and can be directional.

Shadows

VR-Vantage can display shadows for entities, props, and terrain features. You can enable and disable them and configure many aspects of shadow quality.

Shader-Based Effects Texture Maps

VR-Vantage supports several types of shader-based effects texture maps. Texture maps are raster images that apply highly realistic textures to the terrain and models. By applying different types of texture maps to terrain and models, you can improve the visual quality of your simulation without the overhead of high polygon counts.

VR-Vantage supports the following types of shader-based effects maps:

- Normal, or bump, maps. Normal maps give terrains the appearance of relief, such as a rocky landscape.

- Specular maps. Specular maps affect the highlight color of objects.

- Ambient occlusion maps. Ambient occlusion maps model areas that do not receive direct light, such as cracks and crevices and shaded areas of terrain and models. These areas are lit only by ambient light.

- Reflection maps. Reflection maps affect the reflectivity of surfaces, such as windows. Reflection maps reflect objects in the sky, not the terrain.

- Emissive maps. Emissive maps control the emissivity of whatever they are applied to based on the current ambient light values.

- Nightmap maps. Used when ambient light is low. Used in conjunction with emissive maps to control brightness of pixels.

- Emissive Nightmap maps. Used when ambient light is low. Pixels are 100% bright. You do not need an emissive map, but you cannot control pixel brightness.

- Lightmap maps. Lightmaps store precomputed lighting for surfaces in a scene.

- Sensor maps. Adds an intensity to all three color channels to support night vision goggles and infrared.

- Gloss maps. Gloss maps control how wide or narrow the specular highlight appears.

- Reflection maps. Reflects the environment onto an object.

- Flow maps. Flow maps define direction-based distortion, such as water flow.

- Snow Mask maps. Controls how quickly snow accumulates and to what depth.

- Rain Mask effect maps. Controls whether an area will have puddles and the amount of accumulation before puddles appear.

Depth of Field

Depth of field controls the area in the scene that is in focus. Depth of field is calculated based on a focal depth and a focal range. In the image below, the focal depth is about 10 meters, fairly close to the observer, and the focal range is also small, so that the scene is out of focus just past the car.
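As a rough illustration of how a focal depth and a focal range can drive blur, the Python sketch below returns a blur factor for a given distance from the observer. It assumes the in-focus band is centered on the focal depth and that blur ramps up linearly outside it. That interpretation, the `falloff` parameter, and the function name are assumptions, not the VR-Vantage shader logic.

```python
def blur_amount(distance, focal_depth, focal_range, falloff=10.0):
    """Return 0.0 (sharp) to 1.0 (fully blurred) for an object at `distance`.

    Objects within focal_range / 2 of focal_depth are sharp; beyond that,
    blur grows linearly and saturates after `falloff` meters.
    """
    outside = abs(distance - focal_depth) - focal_range / 2.0
    if outside <= 0.0:
        return 0.0
    return min(outside / falloff, 1.0)
```

With a focal depth of 10 m and a focal range of 4 m, an object at 10 m is sharp, one at 17 m is half blurred, and one at 100 m is fully blurred.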

Visualizing Entity Effects and Interactions

VR-Vantage displays:

- Smoking and flaming effects for damaged entities.

- Trailing effects, such as footprints, wakes, missile trails, and dust clouds.

- Muzzle flashes.

- Tactical smoke.

- Smoke and flame for detonation impacts.

The Particle System Editor gives you greater control over special effects such as smoke, fire, explosions, debris, dust trails, and weather effects.

Optical Sensors

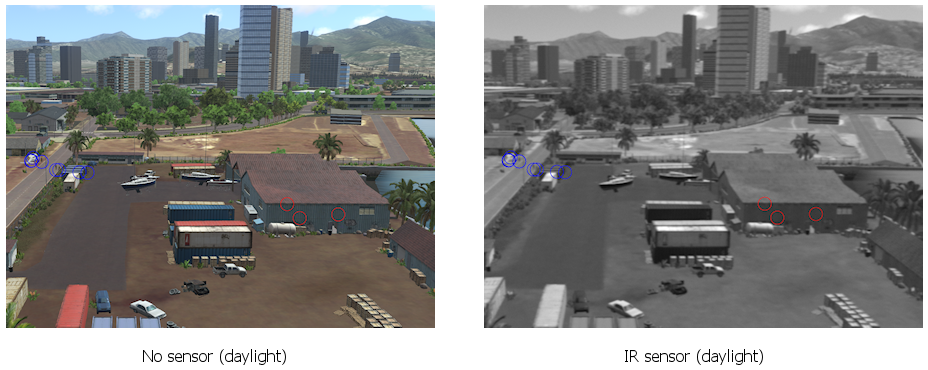

VR-Vantage offers two options for rendering optical sensors: CameraFX and SensorFX.

CameraFX

VR-Vantage can simulate the way that the 3D scene would look if an observer were using visual sensor devices such as night vision goggles or viewing the infrared spectrum. This is an observer-specific setting. You can adjust the contrast, blur, and noise of the view.

VR-Vantage includes a sensor module, CameraFX, which uses the SenSim libraries from JRM Technologies. The sensors do not take into account the materials of the objects that you are viewing. They simply filter the view to produce the desired effect. If you want physics-based sensor effects, you can add the SensorFX plug-in to VR-Vantage.

SensorFX

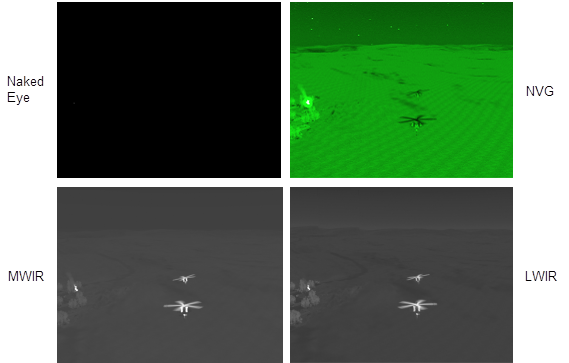

SensorFX is an extra cost plug-in for VR-Vantage. SensorFX changes VR-Vantage from a visual scene generator to a sensor scene generator. SensorFX models the physics of light energy as it is reflected and emitted from surfaces in the scene and as it is transmitted through the atmosphere and into a sensing device. SensorFX also models the collection and processing properties of the sensing device to render an accurate electro-optical (EO), night vision or infrared (IR) scene.

Extensive Sensor Coverage: SensorFX enables you to credibly simulate any sensor in the 0.3-16.0 µm band with VR-Vantage, including:

- FLIRs / Thermal Imagers: 3-5 and 8-12 µm.

- Image Intensifiers / NVGs: 2nd & 3rd Gen.

- EO Cameras: Color CCD, LLTV, BW, SWIR.

Analysis

VR-Vantage is more than just an IG. It has many features that provide information about the simulation being observed.

Entity Information

VR-Vantage provides detailed information about the participants in a simulation.

Entity Labels

In the 3D projection, you can display entity information on translucent entity labels. You can easily display and hide the labels or pin them to the display. In the 2D projection, entity information is displayed adjacent to entity icons. You can quickly change the information that is displayed to maintain a balance of available information and screen clarity.

Track Histories

Track histories allow you to track the paths of entities during an exercise, including the path of munitions as they travel from the shooter to the target. When track histories are enabled, each entity leaves a track ribbon. The ribbon is colored based on the entity’s force by default, but you can change the color as desired.

Fire and Detonation Lines

When an entity fires a munition, a line is drawn between the entity and the location of the detonation. The line is colored blue, red, or green for the force of the shooter.

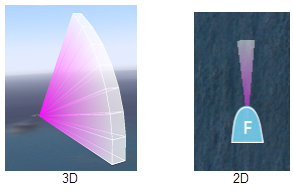

Emitter Volumes

VR-Vantage can display emitter volumes for entities that have emitter systems (Figure 4). You can configure the colors used to represent emitter frequencies and you can configure the size of the emitter volumes.

XR Mode

XR mode uses the XR model set to enhance your ability to understand the layout of forces in the 3D projection. The notional icons are scaled to be visible regardless of the observer’s distance from the entities. You can resize the icons to ensure that you have the right view for your needs.

VR-Vantage also supports scaling of the realistic 3D model set.

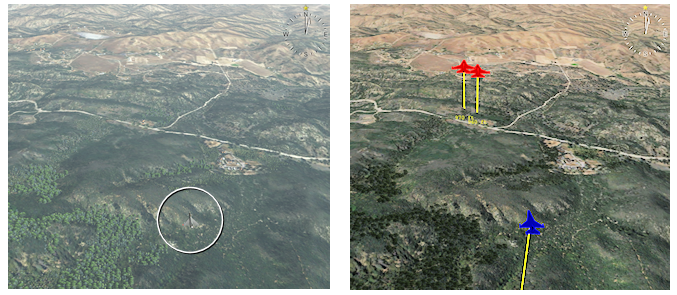

In the following illustration, in Stealth observer mode, the nearer fighter is barely visible and the distant fighters are not visible at all. In XR mode, model scaling and colorized models show all entities. Height-above-terrain lines provide information about their relationship to the terrain.

Terrain scaling complements model scaling by helping to illustrate the relationship of models to the terrain from a great distance.

Tactical Graphics

VR-Vantage displays tactical graphics (routes, points, areas, and so on) published by VR-Forces. It also displays graphics sent using the Remote Graphics API.

Overlays

Tactical graphics and other visual data are displayed on overlays. You can enable and disable the display of graphics individually and by overlay.

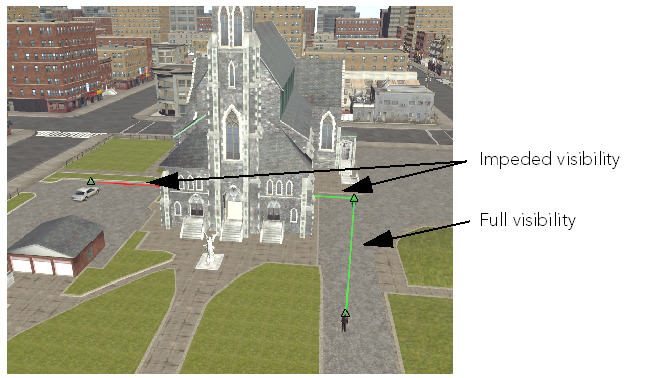

Intervisibility

You can display intervisibility (line-of-sight or LOS) between points, within areas, and between a point and all entities in a defined area. Intervisibility displays can be transient or permanent. Permanent intervisibility displays are objects. You can resize them and move them around the terrain. Intervisibility objects can be tied to a particular entity.
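A point-to-point line-of-sight test can be sketched by sampling the terrain elevation along the segment between the two points and checking whether the terrain rises above the sight line. The Python example below is a minimal illustration under that assumption. The function names and the toy `ridge` terrain are hypothetical and do not reflect how VR-Vantage computes intervisibility.

```python
def has_line_of_sight(height_at, a, b, samples=100):
    """Return True if terrain never rises above the segment from a to b.

    `a` and `b` are (x, y, z) points; `height_at(x, y)` returns terrain
    elevation. Only interior sample points are tested.
    """
    for i in range(1, samples):
        t = i / samples
        x = a[0] + t * (b[0] - a[0])
        y = a[1] + t * (b[1] - a[1])
        z = a[2] + t * (b[2] - a[2])
        if height_at(x, y) > z:
            return False
    return True


def ridge(x, y):
    """Toy terrain: a 50 m high ridge between x = 40 and x = 60."""
    return 50.0 if 40.0 <= x <= 60.0 else 0.0


blocked = has_line_of_sight(ridge, (0.0, 0.0, 10.0), (100.0, 0.0, 10.0))
clear = has_line_of_sight(ridge, (0.0, 0.0, 100.0), (100.0, 0.0, 100.0))
```

Here `blocked` is `False` because the ridge interrupts the low sight line, while `clear` is `True` for the higher one. The sample count trades accuracy against cost; narrow obstacles can be missed if it is too low.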

Terrain Details

VR-Vantage lets you visualize the terrain in ways that help you understand it better:

- Wireframe mode. View the scene in wireframe mode to help understand its structure.

- Feature data. View feature data in the 2D projection.

Connectivity

VR-Vantage can receive data from external simulations and be controlled by them using standard simulation protocols. You can connect and disconnect from simulations during runtime and can configure the connections in the graphical user interface (GUI).

Support for DIS, HLA, and CIGI

VR-Vantage supports the following simulation network protocols:

- HLA Evolved and HLA 4. VR-Vantage supports HLA Evolved (IEEE 1516-2010) and HLA 4 (IEEE 1516-2025). It includes built-in support for the HLA NETN FOM (an extension of RPR FOM 2.0) and can support other FOMs through the FOM Mapping feature.

- Distributed Interactive Simulation (DIS) protocol, version 7 (DIS 7).

- Common Image Generator Interface (CIGI) 3.2, 3.3, and 4.0. CIGI is an interface that allows an image generator (IG) to receive messages from a host to control what is drawn by the IG.

Streaming Video

VR-Vantage can send the view in the display window to a video stream. It supports several different open standards, including H.264 and H.265. If you have a supported viewer, you can use the simulated video as a flexible alternative to live video for demonstration, development, testing, and embedded training of operational video exploitation systems.

Support for Virtual Reality Head Mounted Displays

VR-Vantage is integrated with several virtual reality head-mounted displays (HMDs). VR-Vantage can render stereo views, which are presented inside the HMD. The head position and orientation tracking of the HMD are reflected in the observer as the wearer of the HMD moves their head.

VR-Vantage uses the OpenVR, Oculus SDK, Varjo SDK, and VRgineers libraries to integrate with virtual reality systems. Any system that is capable of using OpenVR should work with VR-Vantage. VR-Vantage is tested with the following systems:

- Oculus Rift

- HTC Vive

- Valve Index

- Varjo

- VRgineers XTAL

- Windows Mixed Reality headsets

VR-Vantage Application Concepts

This section describes, at a high level, major functional capabilities of VR-Vantage applications. These core capabilities are more than just “features” (which are described later). They are integral to what a VR-Vantage application is and can do. The capabilities are:

- Multi-channel displays.

- The observer.

- Flexible, intuitive graphical user interface (GUI).

- Remote control.

- Configurable startup.

Multi-Channel Display

Edge-Blended Multi-Channel

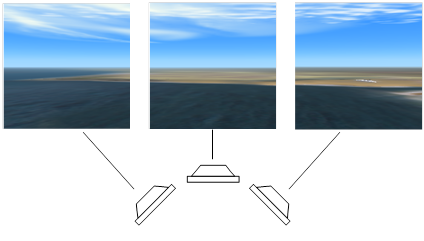

VR-Vantage is built from the ground up with multi-channel distributed rendering. This means that users can add VR-Vantage Remote Display Engines to extend the image generation to fill all the displays in their training devices. And when their display solutions involve curved screens, VR-Vantage’s built-in support for Scalable Display Technologies enables them to set up image warping and edge blending to match the specific geometry of the display.

Multiple Windows

VR-Vantage can display a simulation in multiple windows, with multiple channels. It can control the display of VR-Vantage displays on remote computers. This gives you the flexibility to create configurations such as wrap-around views for a cockpit simulation, multiple monitor wall displays (security camera displays), or multi-channel displays on large screens. You can create multiple channels in one screen. Each has its own observer and can be independently manipulated.

“Inset Views,” earlier in this page, also shows multiple windows on one screen.

VR-Vantage can support displays made up from multiple projectors using the Scalable Display Manager (SDM) from Scalable Display Technologies.

The following illustration shows how you could arrange multiple screens for a wrap-around display.

Window Types

To view a scene and simulation data, a display engine must have a window. VR-Vantage supports the following types of windows:

- Full screen. A full screen window uses the entire area of a monitor. It does not have any menus, panels, or other GUI controls.

- Resizable. A resizable window can be moved, resized, maximized, and minimized. It does not have any GUI controls. An inset view is an example of a resizable window.

- Embedded. An embedded window is part of an application. The main display window in VR-Vantage is an embedded window. The application provides a variety of controls that affect the view in the window. You could, if you wanted to, change VR-Vantage’s window to a different type, in which case it would no longer be embedded in the VR-Vantage window.

A window can have zero or more channels. Each channel defines a viewable area on the screen. You must have at least one channel to see a rendered image in the window. Each channel can have its own observer.
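The relationship between windows, channels, and observers can be pictured as a small data model. The Python sketch below is purely illustrative: the class and field names are hypothetical and are not the VR-Vantage Toolkit API.

```python
from dataclasses import dataclass, field

@dataclass
class Observer:
    name: str

@dataclass
class Channel:
    name: str
    observer: Observer  # each channel can have its own observer

@dataclass
class Window:
    kind: str  # "full screen", "resizable", or "embedded"
    channels: list = field(default_factory=list)

    def can_render(self):
        """A window needs at least one channel to show a rendered image."""
        return len(self.channels) > 0


main = Window("embedded", [Channel("main", Observer("stealth"))])
inset = Window("resizable")  # zero channels: nothing is rendered yet
inset.channels.append(Channel("inset", Observer("uav-camera")))
```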

Support of Stereoscopic Displays

VR-Vantage supports anaglyphic stereo and polarized stereo.

The Observer

The observer, or eyepoint, is the location in the 3D or 2D environment from which you observe the scene. You can move the observer (navigate) through the scene. You can attach the observer to an entity or prop. When the observer is attached to an entity, it moves automatically with that entity. You can also control the observer from a remote application using view control messages.

You can have multiple observers in a VR-Vantage session. They might be associated with different windows. For example, you could have an observer for the main window and one for an inset window.

Intuitive Navigation Modes

Control the absolute position of the observer with the keyboard and mouse. Conveniences like speed scaling and terrain constraint make navigation easy. Or attach to various entities for a more focused view.

Multiple Attach Modes

VR-Vantage offers attach modes that let you:

- Manually control the observer’s position and orientation.

- Follow an entity, maintaining a consistent positional offset, with a matching heading.

- Mimic a vehicle’s position and orientation. You can place the observer inside the vehicle for a driver’s-eye view, or fly outside of it, moving in tandem with the vehicle.

- Tether the observer to an entity, maintaining a consistent positional offset without changing the observer’s heading.

- Automatically track a vehicle’s movement from a fixed viewpoint, as if watching from a control tower.
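The difference between the follow and tether modes can be sketched in two dimensions. In this Python example, follow is assumed to apply the offset in the entity's own frame (so the observer swings around as the entity turns) and to copy its heading, while tether is assumed to apply the offset in world coordinates and leave the observer's heading alone. Those interpretations, the heading convention (degrees counterclockwise from the +x axis), and the function names are assumptions for illustration.

```python
import math

def follow(entity_pos, entity_heading_deg, offset):
    """Offset is (forward, left) in the entity's frame; heading is matched."""
    h = math.radians(entity_heading_deg)
    forward, left = offset
    x = entity_pos[0] + forward * math.cos(h) - left * math.sin(h)
    y = entity_pos[1] + forward * math.sin(h) + left * math.cos(h)
    return (x, y), entity_heading_deg


def tether(entity_pos, observer_heading_deg, offset):
    """Offset is (dx, dy) in world coordinates; observer heading is kept."""
    position = (entity_pos[0] + offset[0], entity_pos[1] + offset[1])
    return position, observer_heading_deg
```

For an entity at the origin heading along +y (90 degrees), `follow` with an offset of `(-10, 0)` places the observer 10 m behind it at roughly `(0, -10)`, looking the same way. `tether` with the same offset keeps the observer at `(-10, 0)` regardless of how the entity turns.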

Observer Zoom

The zoom feature lets you zoom in on an area of the terrain without moving the observer. It is as if the observer were using binoculars to get a closer look at an object.
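Zooming without moving the observer is equivalent to narrowing the camera's field of view. Under a simple pinhole camera assumption, the relationship can be sketched in Python as follows. The function name is hypothetical, and the source does not state how VR-Vantage implements zoom.

```python
import math

def zoomed_fov_deg(base_fov_deg, zoom):
    """Return the field of view that magnifies the image by `zoom`.

    Magnification scales with 1 / tan(fov / 2), so the half-angle tangent
    is divided by the zoom factor.
    """
    half = math.radians(base_fov_deg) / 2.0
    return math.degrees(2.0 * math.atan(math.tan(half) / zoom))
```

For example, a 2x zoom on a 90-degree field of view narrows it to about 53.1 degrees.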

Saved Views

VR-Vantage supports saving an observer’s view at any moment. Saved views can be loaded at startup or during runtime. You can jump quickly from one view to another or animate a smooth transition between them.

Flexible, Intuitive Graphical User Interface

VR-Vantage’s graphical user interface (GUI) allows you to manipulate VR-Vantage from menus, dockable control panels, and the keyboard.

You can hide and display the control panels, keep them docked to the screen, or undock them to make more space available for the display window. The GUI controls can be hidden for full-screen demonstrations.

The GUI follows standard conventions for modern windowing systems.

Persistent Settings

The VR-Vantage GUI remembers the settings as you change them. Settings can be restored back to factory defaults or back to the state they were in when the application was started. Most settings can be exported from one VR-Vantage application and imported into another.

Remote Control

You can control VR-Vantage remotely through view control messages, which can be generated by MAK applications such as VR-Forces, and by programs that use the VR-Vantage Control Toolkit.

Startup Options

On Windows, you can start VR-Vantage applications using any of the usual methods, including the Start menu, desktop icons, and taskbar icons. On both Windows and Linux, you can start VR-Vantage applications from the command line and using batch files or shell scripts. VR-Vantage has many command-line arguments that you can use to customize startup and quickly load preferred configurations.

Performance Features

VR-Vantage has features that help you understand its performance. It also has features that help you improve performance.

Image generation for immersive display systems requires smooth 60 Hz update rates (and VR applications require 90 Hz and up). Achieving this standard of performance while maximizing content density is a constant struggle. VR-Vantage helps users and system integrators manage this fundamental balance.
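These update rates translate directly into a time budget for each frame, which is a useful number to keep in mind when reading performance statistics. A short Python calculation:

```python
def frame_budget_ms(rate_hz):
    """Return the time available to produce one frame, in milliseconds."""
    return 1000.0 / rate_hz


budget_60 = frame_budget_ms(60)  # about 16.67 ms per frame
budget_90 = frame_budget_ms(90)  # about 11.11 ms per frame
```

All per-frame work, including scene update, culling, and drawing, has to fit inside that budget to hold the target rate.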

High-performance image generators, like VR-Vantage, use sophisticated graphics techniques to render beautiful full-motion scenes of the world. Many of these techniques come with a performance cost that can adversely affect frame update rates. VR-Vantage presents all these techniques to integrators, so that they can choose the techniques that most positively impact the rendered scenes for the specific type of their simulation. VR-Vantage also provides tools to help diagnose performance bottlenecks, which is key to addressing issues with content and configuration settings — a precursor to resolving performance problems.

VR-Vantage can display its own performance statistics. The Function Profiler allows developers to profile a function or portion of a function and view on-screen results.

The StatsHandler class in the osgViewer library can gather and display the following rendering performance information.

- Frame rate. osgViewer displays the number of frames rendered per second (FPS).

- Traversal time. osgViewer displays the amount of time spent in each of the event, update, cull, and draw traversals, including a graphical display.

- Geometry information. osgViewer displays the number of rendered osg::Drawable objects, as well as the total number of vertices and primitives processed per frame.

File Caching

VR-Vantage saves loaded files in a fast-loading format so that they load quickly from disk the next time you need them. VR-Vantage also supports preemptive model loading: you can specify a list of models to load at startup so that there are no stalls when a model is first discovered during a simulation.

Object Instancing

VR-Vantage keeps instances of objects in memory and clones or references them instead of loading new copies of the same data from disk again.
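The general pattern behind object instancing is a cache keyed by the source file, so that repeated requests return the same in-memory object instead of reloading it. The Python sketch below illustrates the pattern. `ModelCache`, its `loader` callback, and the file name are hypothetical and are not part of the VR-Vantage Toolkit.

```python
class ModelCache:
    """Load each model once and hand out references to the shared copy."""

    def __init__(self, loader):
        self._loader = loader  # function that reads a model from disk
        self._models = {}
        self.loads = 0         # number of actual disk loads, for inspection

    def get(self, path):
        if path not in self._models:
            self._models[path] = self._loader(path)
            self.loads += 1
        return self._models[path]


# Stand-in loader for illustration; a real one would parse the file.
cache = ModelCache(lambda path: {"path": path})
first = cache.get("tank.flt")
second = cache.get("tank.flt")
```

Both `first` and `second` refer to the same object, and the loader runs only once.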

Configurable Render Settings

VR-Vantage lets you decide which of the visual features you want to enable and disable, such as advanced lighting, wake and spray effects, shadows, and the ocean height map. This lets you optimize visual quality or performance depending on your needs.

Filtering Entities Using Interest Management

VR-Vantage can use interest management to improve its performance in simulations that have high entity counts. Interest management is an implementation of HLA data distribution management (DDM). When interest management is enabled, VR-Vantage filters out all entities that are more than a specified distance from the observer.
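The distance criterion itself is easy to sketch. The Python example below filters a list of entities by their distance from the observer. It only illustrates the filtering rule, not HLA DDM, which applies such filtering at the network level; the data layout and function name are assumptions.

```python
import math

def entities_of_interest(observer, entities, max_distance):
    """Keep only entities within max_distance of the observer position."""
    return [
        entity
        for entity in entities
        if math.dist(observer, entity["position"]) <= max_distance
    ]


entities = [
    {"name": "near-tank", "position": (100.0, 0.0, 0.0)},
    {"name": "far-ship", "position": (50000.0, 0.0, 0.0)},
]
visible = entities_of_interest((0.0, 0.0, 0.0), entities, 10000.0)
```

Only "near-tank" remains in `visible`; the distant ship is never processed or drawn.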

VR-Vantage Toolkit

The VR-Vantage Toolkit allows developers to design and deploy real-time 2D and 3D visual applications for the simulation and training domain. It is a cross-platform C++ API based on OpenSceneGraph (OSG). Through the VR-Vantage Toolkit, developers can extend existing VR-Vantage applications, create their own applications, or embed visuals into existing applications. The VR-Vantage Toolkit also gives you the power to embed any of the VR-Vantage GUI capabilities directly into your simulation applications. Because it is based on OpenSceneGraph, you can leverage value-added plug-ins built by the OSG community and MAK partners.

The VR-Vantage Control Toolkit lets developers send VR-Vantage control messages to VR-Vantage applications. Move the observer, change a setting, or even create graphics on a channel. It can all be done remotely through an HLA or DIS interface.

Technical Details

For hardware and operating system recommendations, please see https://www.mak.com/mak-one/support/help/hardware-recommendations.

Export Classification

VR-Vantage and all of its modules are classified as EAR99 by the US Commerce Department.

VR-Vantage Product Licensing

Each VR-Vantage product is sold separately. Please contact your MAK salesperson for details. MAK license management uses FLEXlm. Licenses can be floating, node-locked, or dongle-based.

Localization

The VR-Vantage graphical user interface can be localized using the Qt Linguist tool, which is included with VR-Vantage.

Coordinate Systems

VR-Vantage supports the Geocentric, UTM, Lambert, Flat Earth, MGRS, and Cartesian coordinate systems.
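To make the relationship between a latitude/longitude/height position and a geocentric one concrete, the sketch below applies the standard geodetic-to-geocentric formulas. It assumes the WGS 84 ellipsoid and an Earth-centered, Earth-fixed frame. This is an assumption made for illustration, not a statement about how VR-Vantage performs conversions internally, and `Geodetic`, `Geocentric`, and `geodeticToGeocentric` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>

struct Geodetic {
    double latitudeDeg;
    double longitudeDeg;
    double heightM;  // height above the ellipsoid, in metres
};

struct Geocentric {
    double x, y, z;  // metres from the centre of the Earth
};

// Converts a geodetic position to Earth-centered, Earth-fixed coordinates
// on the WGS 84 ellipsoid (assumed for this sketch).
Geocentric geodeticToGeocentric(const Geodetic& g) {
    assert(g.latitudeDeg >= -90.0 && g.latitudeDeg <= 90.0);

    const double a = 6378137.0;            // WGS 84 semi-major axis (m)
    const double f = 1.0 / 298.257223563;  // WGS 84 flattening
    const double e2 = f * (2.0 - f);       // first eccentricity squared

    const double degToRad = std::acos(-1.0) / 180.0;
    const double lat = g.latitudeDeg * degToRad;
    const double lon = g.longitudeDeg * degToRad;
    const double sinLat = std::sin(lat);
    const double cosLat = std::cos(lat);

    // Prime vertical radius of curvature at this latitude.
    const double n = a / std::sqrt(1.0 - e2 * sinLat * sinLat);

    return Geocentric{(n + g.heightM) * cosLat * std::cos(lon),
                      (n + g.heightM) * cosLat * std::sin(lon),
                      (n * (1.0 - e2) + g.heightM) * sinLat};
}
```

Under these assumptions, a point on the equator at zero longitude and zero height lies on the X axis at the semi-major axis distance, and a point at the north pole lies on the Z axis at the semi-minor axis distance.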

VR-Vantage File Format Support

VR-Vantage can import elevation, terrain, image, model, and feature data. Some file types can contain more than one type of information. For example, GeoTIFF files can contain elevation data or only image data. The following sections list the supported formats.

Note: In theory, VR-Vantage can load any format supported by osgEarth if you use the earth file format to load them. However, VR-Vantage does not include drivers for all of the formats supported by osgEarth. Furthermore, when using osgEarth, VR-Vantage only supports geocentric terrains. If you are uncertain about support for a particular format, please ask on the support portal (https://mak.com/support).

Terrain Data

VR-Vantage imports terrain data directly as terrain patches. It supports the following formats:

- MAK encrypted data format (.medf)

- OpenFlight (.flt)

- MetaFlight (.mft)

- Shape terrain data (.shp)

- TerraPage (.txp)

- CDB (using an earth file)

- FBX

VR-Vantage provides limited support for data in the following formats. If you have problems loading data in these formats, MAK will try to assist you, but cannot guarantee that it will be possible to use your data.

- 3DS

- OSG

Elevation Data

VR-Vantage supports the following types of elevation data:

- DTED (.dt0, .dt1, .dt2, .dted)

- GeoTIFF

- DEM

- Arc/Info Binary Data (.adf)

- Other formats supported by osgEarth

Supported Image Formats

You can import images in the following formats:

- GeoTIFF (.tif)

- JPEG (.jpg, .jpeg)

- PNG

- RGB

- Windows bitmap (.bmp)

- GIF

- ECW (Windows only)

- Other formats supported by osgEarth using the GDAL driver. For a list of formats supported by GDAL, go to https://gdal.org/ and select the Supported Formats link. If you have problems loading an image format that is not in this list, please check the support portal (https://mak.com/support). However, we cannot guarantee that unlisted formats are fully supported.

3D Formats

VR-Vantage supports 3D formats for vehicle models, buildings, and some terrain:

- OpenFlight (.flt)

- FBX
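Because one file extension can appear in more than one of the lists above, a pre-flight check on incoming data may need to return several categories for one file. The sketch below is a hypothetical helper in standard C++, not a VR-Vantage function. Its table contains only the extensions that are spelled out in the lists above and is an illustration rather than a complete statement of what VR-Vantage accepts.

```cpp
#include <algorithm>
#include <cassert>
#include <cctype>
#include <cstddef>
#include <map>
#include <set>
#include <string>

enum class DataKind { Terrain, Elevation, Image, Model3D };

// Returns the lower-cased extension including the dot, or "" if none.
std::string extensionOf(const std::string& filename) {
    const std::size_t dot = filename.find_last_of('.');
    if (dot == std::string::npos) {
        return "";
    }
    std::string ext = filename.substr(dot);
    std::transform(ext.begin(), ext.end(), ext.begin(), [](unsigned char c) {
        return static_cast<char>(std::tolower(c));
    });
    return ext;
}

// Maps a file name to every category its extension is listed under.
// An empty result means the extension is not in this illustrative table.
std::set<DataKind> kindsFor(const std::string& filename) {
    static const std::map<std::string, std::set<DataKind>> table = {
        {".medf", {DataKind::Terrain}},
        {".flt", {DataKind::Terrain, DataKind::Model3D}},
        {".mft", {DataKind::Terrain}},
        {".shp", {DataKind::Terrain}},
        {".txp", {DataKind::Terrain}},
        {".dt0", {DataKind::Elevation}},
        {".dt1", {DataKind::Elevation}},
        {".dt2", {DataKind::Elevation}},
        {".dted", {DataKind::Elevation}},
        {".adf", {DataKind::Elevation}},
        {".tif", {DataKind::Elevation, DataKind::Image}},
        {".jpg", {DataKind::Image}},
        {".jpeg", {DataKind::Image}},
        {".bmp", {DataKind::Image}},
    };
    const auto it = table.find(extensionOf(filename));
    if (it == table.end()) {
        return std::set<DataKind>();
    }
    return it->second;
}
```

OpenFlight files map to both terrain and 3D model data, and GeoTIFF files map to both elevation and image data, so the helper returns a set rather than a single value.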

Third-Party Software and Content

VR-Vantage includes software and content licensed from third parties, including:

- SilverLining™. Real-time sky, 3D cloud, and ocean rendering from Sundog Software.

- Triton Ocean SDK. 3D ocean and ship wakes from Sundog Software.

- GL Studio. Interactive cockpit instrumentation and HMI from DiSTI.

- SpeedTree. Animated 3D foliage from Interactive Data Visualization (IDV).

- 3D models, terrain, and graphical content from Simthetiq, Simaction, TurboSquid, and TerraSim.

- OpenSceneGraph. An open source 3D graphics toolkit hosted at https://www.openscenegraph.org.

- osgEarth. An open source streaming terrain plug-in by Pelican Mapping, at http://osgEarth.org.

Please note: The run-time and developers' rights for VR-Vantage customers vary from vendor to vendor.

SilverLining and Triton (Environment)

VR-Vantage uses SilverLining software and content to compute lighting and to render the sky, clouds, sun, and moon. SilverLining is developed by Sundog Software (https://www.sundog-soft.com).

The Triton Ocean SDK, also from Sundog Software, is used for dynamic ocean effects. Most VR-Vantage customers do not need to buy a SilverLining or Triton license of any kind. In general, a VR-Vantage customer has the right to use the technology that is built into VR-Vantage in any VR-Vantage-based application (including custom applications built using the Toolkit).

GL Studio (Cockpits)

VR-Vantage uses GL Studio software and content to render interactive cockpit instrumentation displays. GL Studio is developed by DiSTI (https://www.disti.com).

VR-Vantage is delivered with several generic cockpit displays. You can use the built-in cockpit displays in any VR-Vantage-based application (including custom applications built using the Toolkit), without a GL Studio license of any kind.

SpeedTree (Vegetation)

VR-Vantage uses SpeedTree software and content for animated real-time 3D foliage and vegetation. SpeedTree is developed by Interactive Data Visualization (IDV) (https://www.speedtree.com).

A VR-Vantage customer does not need a SpeedTree license of any kind to use the SpeedTree functionality that is built into the VR-Vantage applications that MAK delivers. You can build and run plug-ins to our out-of-the-box applications without a SpeedTree license, as long as your plug-ins do not need to directly access the SpeedTree API.

3D Models, Terrain, and Graphical Content

VR-Vantage includes a rich set of 3D models for vehicles, weapons, cultural features, and urban clutter (signs, barriers, lampposts, and so on). It also includes several sample terrain databases to help you get started with VR-Vantage, and to help demonstrate VR-Vantage. Much of this content is derived from 3D data that we have licensed from third parties:

- Many of the high-quality vehicle models come from Simthetiq (https://www.simthetiq.com).

- Some models come from Simaction (formerly RealDB) (http://simaction.com).

- Some of the Middle Eastern building models and urban clutter objects that are used in our sample terrain databases were licensed from TurboSquid (https://www.turbosquid.com).

All of this content is distributed in the VR-Vantage package solely so that VR-Vantage can use it. Use of any of the VR-Vantage models, textures, terrains, or other content outside of VR-Vantage-based applications is not permitted by the VR-Vantage license.

OpenSceneGraph (3D Rendering)

VR-Vantage uses OpenSceneGraph for some of its underlying infrastructure. However, the scene is rendered using an advanced proprietary shader pipeline that takes advantage of many of the latest techniques in modern computer graphics. OpenSceneGraph is an open source, cross-platform graphics toolkit for the development of high-performance graphics applications. The OpenSceneGraph source repository is maintained by Robert Osfield at https://www.openscenegraph.org. It is distributed under the OpenSceneGraph Public License (OSGPL), which is based on the GNU Lesser General Public License (LGPL).

MAK will provide modified source code for OSG upon request, as per the OSGPL. Please submit a request through our support portal (https://mak.com/support) to obtain the download links for our modified source.

osgEarth (Streaming Terrain)

VR-Vantage uses osgEarth to import streaming terrain elevation and imagery data. The data can be streamed from external servers and sources or from a directory on the computer running VR-Vantage. osgEarth is an open source plug-in to OpenSceneGraph, maintained by Pelican Mapping at http://osgEarth.org. osgEarth is distributed under the GNU Lesser General Public License (LGPL).

MAK will provide modified source code for osgEarth upon request, as per the LGPL. Please submit a request through our support portal (https://mak.com/support) to obtain the download links for our modified source.